
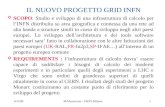

INFN-GRID Project

The Grid: a new solution for the computing of the INFN experiments

A. Ghiselli

INFN-CNAF, Bologna

Cagliari, 13 September 2000

Contents: Introduction; Architectural elements; Testbed & Network

INFN-GRID

• Three-year project
• Participation of the LHC experiments Alice, Atlas, CMS and LHCb, and of other experiments and projects such as Virgo and APE
• Participation of the computing services of the INFN sections and of CNAF
• Workplan divided into 5 Work Packages (complementary to and in support of DATAGRID)

Workplan

• WP1 Evaluation of Globus toolkit: M. Sgaravatto, F. Prelz
• WP2 Integration with DATAGRID and adaptation to the national grid project:
– WP2.1 Workload management: C. Vistoli, M. Sgaravatto
– WP2.2 Data management: L. Barone, L. Silvestris
– WP2.3 Application monitoring: U. Marconi, D. Galli
– WP2.4 Computing fabric & mass storage: G. Maron, M. Michelotto
• WP3 Applications and Integration:
– Alice: R. Barbera, Castellano, P. Cerello
– Atlas: L. Luminari, B. Osculati, S. Resconi
– CMS: G. Bagliesi, C. Grandi, I. Lippi, L. Silvestris
– LHCb: R. Santacesaria, V. Vagnoni
• WP4 National Testbed: A. Ghiselli, L. Gaido
• WP5 Network: T. Ferrari, L. Gaido

Project management

Collaboration Board

Technical Board, Executive Board

Per site: local coordinator, one representative for each experiment + EB, TB

Technical coordinator, WP coordinators

Application experts

Project manager, computing coordinators of the experiments

Technical coordinator, INFN representative in DATAGRID

INFN structure | Total FTE
BA | 6.70
BO | 8.70
CA | 2.40
CNAF | 6.30
CS (G.c. LNF) | 0.60
CT | 8.30
FE | 1.00
FI | 3.30
GE | 2.10
LE | 5.00
LNF |
LNL | 2.90
MI (infn+lasa) | 2.80
NA | 4.40
PD | 8.90
PG | 2.70
PI | 6.30
PR (G.c. MI) | 0.40
PV | 1.25
ROMA I | 8.40
ROMA II | 0.90
ROMA III | 0.80
SA (G.c. NA) | 1.80
TN (G.c. PD) |
TO | 6.50
TS | 4.00
UD (G.c. TS) | 0.25
Virgo |
Total | 96.70
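The per-structure FTE figures can be checked against the stated total. The short sketch below transcribes the values from the slide (decimal commas written as points) and sums them with exact decimal arithmetic. The names `FTE` and `total_fte` are illustrative only, and LNF, TN and Virgo are left out because the transcript carries no figure for them.

```python
from decimal import Decimal

# FTE per INFN structure as listed on the slide.
# LNF, TN and Virgo have no figure in the transcript and are omitted.
FTE = {
    "BA": "6.70", "BO": "8.70", "CA": "2.40", "CNAF": "6.30",
    "CS": "0.60", "CT": "8.30", "FE": "1.00", "FI": "3.30",
    "GE": "2.10", "LE": "5.00", "LNL": "2.90", "MI": "2.80",
    "NA": "4.40", "PD": "8.90", "PG": "2.70", "PI": "6.30",
    "PR": "0.40", "PV": "1.25", "ROMA I": "8.40", "ROMA II": "0.90",
    "ROMA III": "0.80", "SA": "1.80", "TO": "6.50", "TS": "4.00",
    "UD": "0.25",
}


def total_fte(table):
    """Sum the FTE values exactly, avoiding binary floating-point rounding."""
    return sum((Decimal(value) for value in table.values()), Decimal("0"))


if __name__ == "__main__":
    print(total_fte(FTE))  # 96.70
```

The listed values add up to the 96.70 FTE quoted on the slide.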

The Grid

It is a system made up of:
• computing resources, data servers, instruments … that are geographically distributed and accessible through a very efficient network,
• software that provides a uniform interface and ensures efficient and pervasive use of the resources.

The Grid software is a set of services for:
- Obtaining information about grid components
- Locating and scheduling resources
- Communicating
- Accessing code and data
- Measuring performance
- Authenticating users and resources
- Ensuring the privacy of communication
- ….

From “The GRID”, edited by I. Foster and C. Kesselman

'Task execution' model on the Grid

[Diagram components: Client a, Client b, Client c; Site 1; Site 2; Server B, Server C, Server D; Directory Service; Scheduler; Monitor; WAN]

(0) Monitor server and network status periodically
(1) Query server
(2) Assign server
(3) Request execution
(4) Return computed result
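Steps (0) to (4) of the task execution model can be illustrated with a small in-process sketch. Everything here is hypothetical: the class names, the `load` figures, and above all the "least loaded server" policy, which the slide does not specify. A real grid would perform these steps over the WAN through grid services rather than through local method calls.

```python
from dataclasses import dataclass, field


@dataclass
class Server:
    name: str
    load: float  # assumed metric: fraction of capacity in use, 0.0 to 1.0

    def execute(self, task):
        # (3) run the requested task; a task is just a Python callable here
        return task()


@dataclass
class DirectoryService:
    status: dict = field(default_factory=dict)

    def report(self, server):
        # (0) the monitor periodically publishes the server status
        self.status[server.name] = server.load

    def query(self):
        # (1) return a snapshot of the currently known status
        return dict(self.status)


class Scheduler:
    def __init__(self, directory, servers):
        self.directory = directory
        self.servers = {server.name: server for server in servers}

    def assign(self):
        # (1) query the directory, then (2) assign the least loaded server
        # (assumed policy, not taken from the slide)
        status = self.directory.query()
        if not status:
            raise RuntimeError("no server status available")
        return self.servers[min(status, key=status.get)]


def run_task(scheduler, task):
    server = scheduler.assign()    # steps (1) and (2)
    result = server.execute(task)  # step (3)
    return server.name, result     # step (4): result goes back to the client


if __name__ == "__main__":
    servers = [Server("B", 0.8), Server("C", 0.2), Server("D", 0.5)]
    directory = DirectoryService()
    for server in servers:
        directory.report(server)   # step (0)
    print(run_task(Scheduler(directory, servers), lambda: sum(range(10))))
```

With the hypothetical loads above, the task is assigned to Server C because it reports the lowest load.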

Why the grid now?

• Characteristics of the computing of the LHC experiments:
– Very large, geographically distributed collaborations
– Large amount of data to analyse
• Distributed computing resources:
– Availability of PC clusters with high computing capacity
• Networks:
– reliability, high local and wide-area bandwidth, QoS services

Evolution of WAN technology

– Bandwidth (bps): 2 M (1989), 34 M (1997), 155-622 Mbps, 2.5-10-40 Gbps
– Transmission technology: Digital H., SDH/Sonet, ATM, PoS, optical networks/DWDM
– Per-hop latency: from hundreds of msec to a few msec
– TCP, UDP, RTP, reliable multicast … over IP
– Fault tolerance and QoS support
– Driving applications: telephony, WWW, videoconferencing, video on demand, truly distributed applications such as distributed computing, online control of scientific instruments, teleimmersion …. GRID

The building blocks for moving from a data transmission network to a network of computing services are available!

Grid architecture

• What is involved in building a grid?
– Users
– Applications
– Services
– Resources (end systems, network, data storage, …)

GRID USERS

Class | Purpose | Makes use of | Concerns
End users | Solve problems | Applications | Transparency, performance
Application developers | Develop applications | Programming models and tools | Ease of use, performance
Tools developers | Develop tools, programming models | Grid services | Adaptivity, performance, security
Grid developers | Provide basic grid services | Local system services | Simplicity, connectivity, security
System administrators | Manage grid resources | Management tools | Balancing local and global concerns

HEP computing

Key elements:
- Data type and size per LHC experiment: RAW (1 MB per event, 10^15 B/year/experiment), ESD (100 KB per event), AOD (10 KB per event)
- Data processing: event reconstruction, calibration, data reduction, event selection, analysis, event simulation
- Distributed, hierarchical regional centers architecture: Tier 0 (CERN), Tier 1, … desktop
- Large and distributed user community
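The per-event sizes and the yearly RAW volume quoted above imply an order of magnitude for the number of events and for the ESD and AOD volumes. The sketch below works this out under two assumptions that are not stated on the slide: decimal units (1 MB = 10^6 B, 1 KB = 10^3 B), and exactly one ESD and one AOD record per RAW event. The constant and function names are illustrative.

```python
# Figures from the slide, per LHC experiment (decimal units assumed).
RAW_EVENT_BYTES = 10**6          # 1 MB per event
ESD_EVENT_BYTES = 100 * 10**3    # 100 KB per event
AOD_EVENT_BYTES = 10 * 10**3     # 10 KB per event
RAW_BYTES_PER_YEAR = 10**15      # 10^15 B/year/experiment


def events_per_year(raw_bytes_per_year=RAW_BYTES_PER_YEAR,
                    raw_event_bytes=RAW_EVENT_BYTES):
    """Number of events implied by the yearly RAW volume."""
    return raw_bytes_per_year // raw_event_bytes


def yearly_volume(event_bytes, n_events):
    """Yearly volume in bytes, assuming one record of this type per event."""
    return event_bytes * n_events


if __name__ == "__main__":
    n = events_per_year()
    print(f"events/year: {n:.0e}")
    print(f"ESD bytes/year: {yearly_volume(ESD_EVENT_BYTES, n):.0e}")
    print(f"AOD bytes/year: {yearly_volume(AOD_EVENT_BYTES, n):.0e}")
```

Under these assumptions each experiment records about 10^9 events per year, giving roughly 10^14 B of ESD and 10^13 B of AOD.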

HEP computing cont.

Application classes:
1) Traditional applications running on a single computer with local I/O
2) Scheduled Client/Server applications running on multiple computers with local or remote I/O
3) Interactive Client/Server applications
4) Grid-oriented applications integrated with grid services

Global Grid Architecture

[Layer diagram, top to bottom]
• Applications: batch traditional applications; Client/Server interactive applications; Client/Server batch applications; Grid-enabled applications
• Application Toolkit Layer: HTB, Data Mover, monitoring tools, workload management, application monitoring, data management
• Grid Services Layer (resource- and application-independent services): Information, Resource management (GRAM), Security (GSI), Data access, Fault detection, . . .
• Grid Fabric Layer: communication protocols, data servers, remote monitoring, PC-cluster, schedulers, network services (QoS), optical technology

Work package labels on the diagram: WP1, WP2.1, WP2.2, WP2.3, WP2.4, WP5, WP3

TESTBED & NETWORK Workpackage

• The INFN-GRID TESTBED WP has the mandate to plan, organize, and enable a testbed for end-to-end experiment applications.
• It will be based on the computing infrastructures set up by the INFN community in the years 2001-2003 for the needs of the experiments that will exploit the GRID.
• These infrastructures will mainly be the Tier1-Tier3 Regional Center prototypes for the LHC experiments.
• It will be based on a high-performance NETWORK planned by WP5, with the bandwidth and the services required by the experiment applications (WP3).
• The INFN GRID testbed will be connected to the European testbed and, through it, to the other national grids participating in the European project.

cont.

• The project activities will provide a layout of the necessary size to test in real life the middleware, the application development, and the development of the LHC experiment computing models during this prototyping phase, together with the necessary support for the LHC experiment computing activities.
• The national testbed deployment will be synchronised with the DataGrid project activities.

Testbed baselines

• Data-intensive computing: to address the problem of storing a large amount of data.
• The hierarchical Tier-n Regional Centers model recommended by MONARC will be prototyped and tested.
• Production-oriented testbed:
– straightforward demonstrator testbeds for prototyping and for continuously proving the production capabilities of the overall system;
– advanced testbeds which stretch the system to its limits.

Cont.

• Real goals and time-scales:
– "real Experiment applications".
– By the end of 2003, all the LHC Experiments have to reach a "reasonable scale" (order of 10%) in order to proceed to the final "full scale" implementation.
• Integrated system: efficient utilization of the distributed resources, integrated in a common grid, while maintaining the policies of the experiments.
• Use of the MONARC simulation tool in the design and optimization of the computing infrastructure, both local and distributed.

Experiments’ capacity in Italy

Experiment | 2001 | 2002 | 2003
CMS | 8000 SI95, 21 TB | 16000 SI95, 40 TB | 32000 SI95, 80 TB
ATLAS | 5000 SI95, 5 TB | 8000 SI95, 10 TB | 20000 SI95, 20 TB
ALICE | 6800 SI95, 6.4 TB | 15000 SI95, 12 TB | 23000 SI95, 22 TB
Virgo | …
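The planned capacities of the three experiments with published figures can be added up per year. This is only a sketch: the Virgo figures are elided on the slide and are therefore not included, and the names `CAPACITY` and `totals` are illustrative. SI95 is the SPECint95 CPU benchmark unit; the TB column is treated here as storage.

```python
# (CPU in SI95, storage in TB) per experiment and year, from the slide.
# Virgo is elided in the source and deliberately left out.
CAPACITY = {
    "CMS":   {2001: (8000, 21.0), 2002: (16000, 40.0), 2003: (32000, 80.0)},
    "ATLAS": {2001: (5000, 5.0),  2002: (8000, 10.0),  2003: (20000, 20.0)},
    "ALICE": {2001: (6800, 6.4),  2002: (15000, 12.0), 2003: (23000, 22.0)},
}


def totals(capacity, year):
    """Return (total SI95, total TB) over all listed experiments for a year."""
    cpu_si95 = sum(plan[year][0] for plan in capacity.values())
    storage_tb = sum(plan[year][1] for plan in capacity.values())
    # Round to one decimal to hide binary floating-point noise in the TB sum.
    return cpu_si95, round(storage_tb, 1)


if __name__ == "__main__":
    for year in (2001, 2002, 2003):
        cpu, storage = totals(CAPACITY, year)
        print(f"{year}: {cpu} SI95, {storage} TB")
```

For CMS, ATLAS and ALICE together this gives 19800 SI95 and 32.4 TB in 2001, rising to 75000 SI95 and 122 TB in 2003.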

Hierarchy of the Regional Centers

[Diagram]
• Tier 0: CERN
• Tier 1: France, Italy, UK, Fermilab
• Tier 2: Tier2 centers
• Tier 3: INFN sections ("sezione")
• Tier 4: desktops
Link speeds shown on the diagram: 2.5 Gbps, 622 Mbps, 100 Mbps-1 Gbps
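To give a feel for the link speeds in the hierarchy, the sketch below computes the ideal time needed to move a given amount of data over each nominal rate. It is an idealised estimate under stated assumptions: the full nominal rate is available to a single transfer, protocol overhead is ignored, and 1 TB is taken as 10^12 bytes. The names `LINKS_BPS` and `transfer_seconds` are illustrative.

```python
# Nominal link rates appearing on the slide, in bits per second.
LINKS_BPS = {
    "2.5 Gbps": 2_500_000_000,
    "622 Mbps": 622_000_000,
    "1 Gbps": 1_000_000_000,
    "100 Mbps": 100_000_000,
}


def transfer_seconds(n_bytes, link_bps):
    """Ideal transfer time: payload bits divided by the nominal link rate."""
    if link_bps <= 0:
        raise ValueError("link rate must be positive")
    return n_bytes * 8 / link_bps


if __name__ == "__main__":
    one_terabyte = 10**12  # assumed decimal terabyte
    for label, bps in LINKS_BPS.items():
        hours = transfer_seconds(one_terabyte, bps) / 3600
        print(f"{label:>8}: {hours:.2f} h per TB")
```

Real transfers would take longer, since links are shared and protocols add overhead.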

HEP computing: GRID Layout Model

[Diagram: multi-level Regional Center (RC) hierarchy linking CERN and DATAGRID with Tier 1, Tier 2 and Tier 3-4 sites, built from Data Servers, CPU Servers and desktops]

GARR-B Topology

155 Mbps ATM-based network

[Map legend: access points (PoP); main transport nodes]

Nodes: TORINO, PADOVA, BARI, PALERMO, FIRENZE, PAVIA, GENOVA, NAPOLI, CAGLIARI, TRIESTE, ROMA, PISA, L’AQUILA, CATANIA, BOLOGNA, UDINE, TRENTO, PERUGIA, LNF, LNGS, SASSARI, LECCE, LNS, LNL, SALERNO, COSENZA, S.Piero, FERRARA, PARMA, CNAF, ROMA2, MILANO

Link labels on the map: USA, 155 Mbps, T3, CERN, 600 Mbps

Map of the sites for the RC prototypes

[Map legend, Regional Centers: CMS primary, T.1, T2/3; Atlas primary, T.1, T2/3; Alice primary, T.1, T2/3; LHCb T.1, T2/3]

Testbed progressively growing from quantum to production Grid

[Chart: COMPLEXITY versus TIME. Stages: Quantum GRID, TESTBED 1, INTEGRATION, GRID. Experiments shown: CMS, ALICE, ATLAS, LHCb, VIRGO, APE, ARGO, COMPASS, ??]

Deliverables

Deliverable | Description | Delivery date
D1.1 | Widely deployed testbed of GRID basic services | 6 months
D1.2 | Application 1 (traditional applications) testbed | 12 months
D1.3 | Application 2 (scheduled client/server applications) testbed | 18 months
 | Application n (chaotic client/server applications) testbed | 30 months
D1.4 | Grid fabric for distributed Tier 1 | 24 months
D1.5 | Grid fabric for all Tier hierarchy | 36 months

Milestones

Milestone | Description | Date
M1.1 | Testbeds topology | 6 months
M1.2 | Tier1, Tier2&3 computing model experimentation | 12 months
M1.3 | Grids prototypes | 18 months
M1.4 | Scalability tests | 24 months
M1.5 | Validation tests | 36 months

Common resources

[Timeline chart, 2000-2003] Items:
• 3 PC * 26
• Upgraded interfaces on routers
• L2 GE switch
• VPN layout

Testbed network layout

[Timeline chart, 2000-2003] Items:
• Upgraded access on production network
• VPN at 34 Mbps
• VPN at 155 Mbps
• VPN at 155 + 622 Mbps + 2.5 Gbps

Testbed participants

• Layout setup coordinators (computing resources + network layout)
• WP participants
• Application developers
• Users

> Total 20 FTE

What we are ready to do

• To interconnect Globus-based grids
• Globus commands (globus-run, …)
• Globus tools (gsiftp, …..)
• GARA tests……

Testbed resources

• Now
– Small PBS PC-cluster and SUN-cluster (CNAF)
– WAN Condor pool (about 300 nodes)
• By the end of 2000: quantum grid
– More sites with PBS and LSF clusters
– More PCs in the clusters
• Spring 2001
– Experiment computing power